It was nice to attend SIGGRAPH 2022 in person after two long virtual years. A 4-day conference meant extra time to recover from jet lag, check out the city or even plan a short trip. Shorter technical paper talks presented a quick overview of many hot topics in research. A plus point of the hybrid conference is that one can always go back and listen to the pre-recorded longer talks for more details.

Something that often popped up in talks, be it from NVIDIA, Pixar or Autodesk, was the rise of USD and its adoption in production pipelines.

USD stands for Universal Scene Description, a file format that describes a scene graph. It is not just limited to geometry or materials, but rather can contain anything, be it lights, cameras, animation, shaders or any other typical necessities in a production environment. In addition, it provides capabilities to represent advanced concepts such as multiple variants of a product, in an elegant way.

In short, USD is a rich common language and format for packing up, assembling and editing your scene, and serves as a clean and robust exchange format to describe the work from different production departments without getting in each other’s way.

One can refer to other files and compose files from multiple sub-scenes – a bit similar to how layers work in Photoshop. This makes collaboration very predictable and in turn allows artists to focus on being creative, instead of having to focus on data management. Because of this powerful concept of linkage, one can create and access more complex worlds while loading and visualizing them in ways which would have been impossible with other, less capable formats.
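To make the layering idea a bit more concrete, here is a minimal toy sketch in plain Python. It is not the USD API and covers only a tiny slice of what USD composition does: each "layer" is just a dictionary of attribute opinions per prim path, and the strongest layer wins per attribute. The names `compose`, `modeling`, `lookdev` and `lighting`, as well as the prim paths and values, are made up for illustration.

```python
# Toy model of layered scene composition; NOT the USD API.
# Each "layer" maps a prim path to the attribute opinions one department authored.
from typing import Any

Layer = dict[str, dict[str, Any]]


def compose(layers: list[Layer]) -> Layer:
    """Merge layers ordered strongest-first; the strongest opinion per attribute wins."""
    stage: Layer = {}
    # Walk from weakest to strongest so stronger layers overwrite weaker ones.
    for layer in reversed(layers):
        for prim_path, attrs in layer.items():
            stage.setdefault(prim_path, {}).update(attrs)
    return stage


# Hypothetical department layers for one shot.
modeling = {"/World/Chair": {"mesh": "chair.obj", "color": "grey"}}
lookdev = {"/World/Chair": {"color": "red"}}
lighting = {"/World/KeyLight": {"intensity": 5.0}}

# Listed strongest first, mirroring how USD orders sublayers.
stage = compose([lighting, lookdev, modeling])
print(stage["/World/Chair"])
```

In this sketch the look development layer changes the chair's color without touching the modeling layer's data, which is roughly the kind of non-destructive collaboration the layer analogy describes. Real USD composition is considerably richer, with references, payloads, variants and more.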

Until last year, one commonly heard that the VFX and animation industry was widely adopting Pixar’s USD format to describe and manipulate scene information throughout the production of CG content. SIGGRAPH 2021 even had a complete session introducing several tools encouraging USD’s adoption. Examples include Conduit, a USD-centric layer on top of the next-generation pipeline framework from Blue Sky Studios, and Pixar’s Digital Backlot, a set of tools to resurrect more than 33,000 previously unusable set and prop models from old films as a studio-wide resource.

Finally, this year we had Disney’s “Encanto”: the first feature film using the new USD-based pipeline completely, from beginning to end. On top of that, the entire movie was done while working remotely, which was yet another reason to make collaboration between artists as easy as possible. The team did a great job considering the numerous issues it had to tackle: animation curves, authoring, interactive performance, multi-shot setup ability for stereo, set variants/environments, and more.

Autodesk has demonstrated compiling USD for the browser, raising the possibility of viewing USD data natively in Chrome or Firefox.

Jensen Huang (at NVIDIA’s Special address at SIGGRAPH) is betting on USD becoming the language of the future and the Metaverse, with NVIDIA already working on expanding USD’s capabilities beyond visual effects and into industries like engineering, architecture, and manufacturing.

At the same time, glTF is increasingly becoming a de facto standard for asset transmission (as the name already implies: “gl (Graphics Library) Transmission Format”). The relationship between the two formats has already been elaborated on by Khronos, of which companies such as NVIDIA, Autodesk and DGG are all members and contributors. You can check one of their recent presentations on the future of glTF. In connection with this, joint efforts are being made as part of the Metaverse Standards Forum, which brings together tool providers and other key players in the ecosystem to sync on glTF, USD and related standards.

Finally, there is another draft format entering the stage: glXF is adding capabilities of scene composition to glTF. Scenes and behaviors from multiple glTF assets can be composited, using a JSON-formatted node hierarchy. The aim, however, is by no means to replace USD. Instead, glXF is aiming at efficiency in transmission/delivery use cases, such as re-use of assets, personalization, and optimization of loading procedures (spatial and temporal).

Let’s wait and watch how the long-term goals of real-time proceduralism, real-time streaming, and compatibility with web browsers unfold.

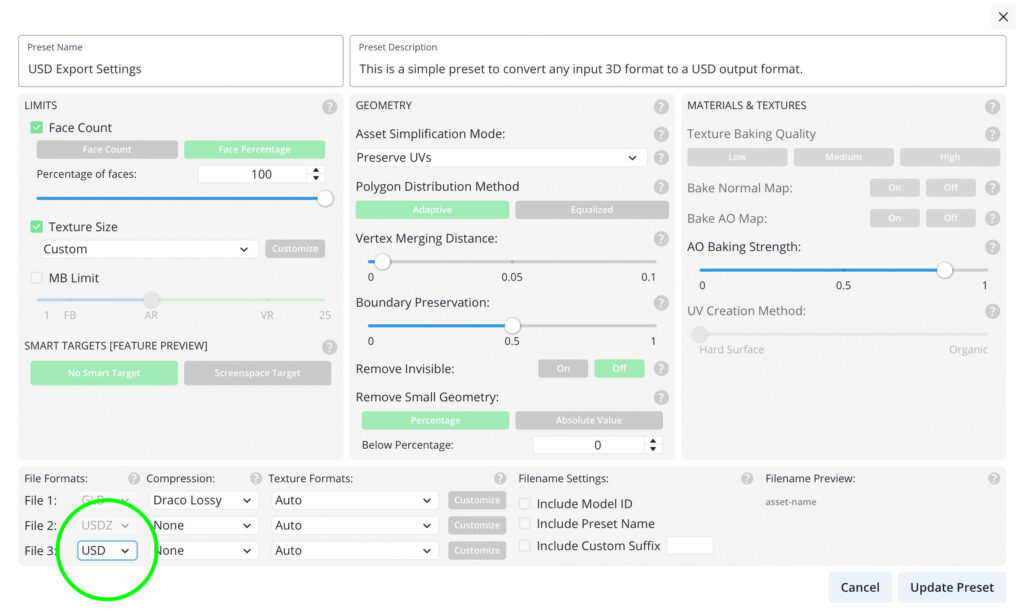

We at RapidCompact have been supporting glTF since inception, and for a while now we have supported exporting USDZ files with the subset of features that Apple devices support for ARKit. A few releases ago we also started supporting the import of USD and USDZ files and have been continuously expanding it to cover more complex interchange USD files. In the near future, one can expect improved support for instancing, filtering by purpose, automatic orientation conversions and better support for complex mesh indexing.

Feel free to try out our latest release. We would be really happy to hear your feedback.

Try out RapidCompact with the Free plan.

If you were not at SIGGRAPH, you can still check out what DGG’s booth looked like, or see what new features and tech we presented.

Upload and process 3D models with the free web demo or get in touch if you have any questions. We’re happy to help.
